Posted by Paul Nisbet on the 7th March, 2018

At a Crick 'Ask the Expert' webinar on 6 March 2018, Dr Abi James drew attention to research on the use of technology in examinations carried out at Runshaw College, a sixth-form and further education (FE) college in England. The research found that most students preferred using a computer reader to a human reader, and that using the software had a positive impact on attainment. The report contains many useful insights on using technology to support candidates with additional support needs and is well worth reading.

When we first investigated Digital Question Papers in 2004/5, our logic went something like this:

We conducted trials and piloted the digital question papers (you can read the reports on the CALL Digital Assessments website). The results seemed to support the hypothesis, and SQA started offering Digital Question Papers in 2008.

However, something that sounds sensible is not necessarily true. That is why this research report from Runshaw College is so helpful: it adds to the relatively sparse evidence base on the use of computer readers in examinations.

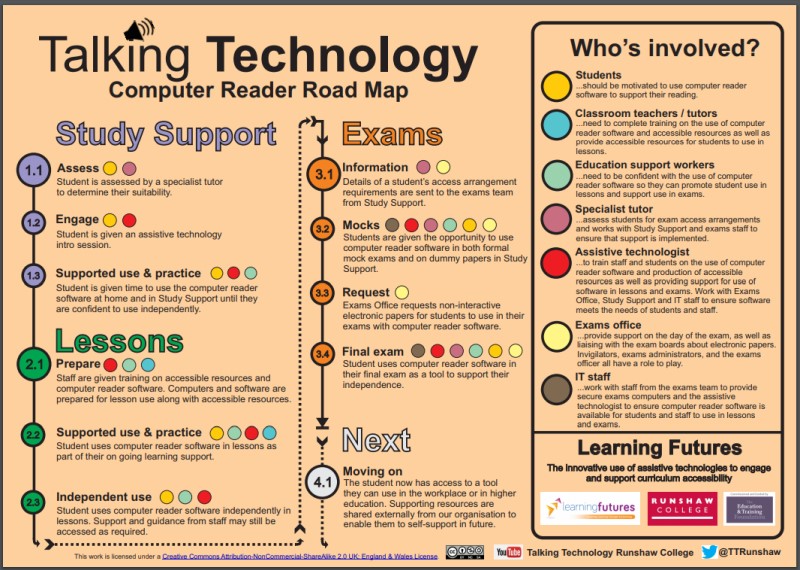

Following a successful pilot study in which 17 students used reading software in exams, the College wanted to extend the project to more students. Because the arrangements used in examinations should 'be the normal way of working', the project began by providing universal access to computer reading software (Orato, a free text reader). This was accompanied by a programme of staff development and teaching for students, and by support for students to access curriculum materials in accessible digital formats. Students were then offered Orato as one of the access arrangement options in the 2015 examinations. The team's Talking Technology Computer Reader Road Map provides a very helpful overview of this process:

The students who took part in the project had been assessed as eligible for a human reader in examinations. That is, the candidate has persistent and significant difficulties in accessing written text, is disabled under the terms of the Equality Act 2010, and achieves a below-average standardised score of 84 or less in reading accuracy, comprehension or speed (Access Arrangements and Reasonable Adjustments, JCQ p.35).

The project team produced a really useful set of resources that would be very helpful for anyone thinking of introducing computer readers into schools, particularly for use in examinations.
